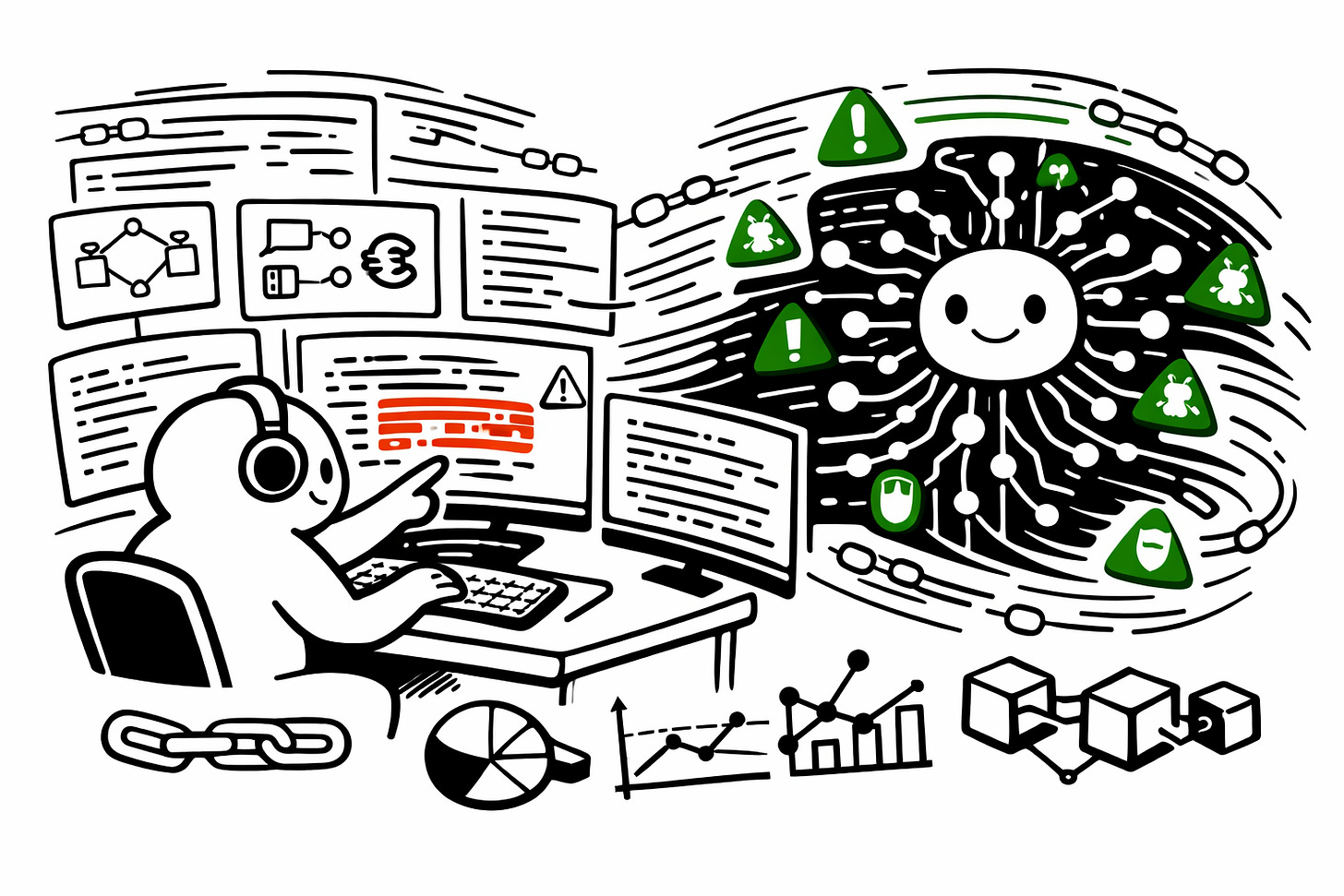

AI is transforming smart contract audits, but it will not replace human auditors

AI is reshaping software development, and Web3 is no exception. Developers are using AI to write smart contracts faster than ever, and some are now 5–10× more productive. That speed is exciting, but it also brings new risks.

The vibe coding trend

We increasingly see AI-generated (“vibe-coded”) code that:

Is complex in ways the team doesn’t fully understand

Has “perfect” test coverage that checks nothing meaningful

Misses invariants and edge cases

Is bloated with code that serves no functional purpose

More code. Less understanding. This complicates security reviews.
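To make the “perfect coverage that checks nothing” problem concrete, here is a minimal Python sketch. The `Vault` class, its deliberate bug, and both test functions are hypothetical stand-ins for contract logic, not code from any real project. The first test executes every line and passes; the second checks an invariant and catches the bug.

```python
class Vault:
    """Toy in-memory vault (hypothetical stand-in for a contract)."""

    def __init__(self):
        self.balances = {}
        self.total = 0

    def deposit(self, user, amount):
        self.balances[user] = self.balances.get(user, 0) + amount
        self.total += amount

    def withdraw(self, user, amount):
        # Deliberate bug: no check that the user holds enough funds.
        self.balances[user] = self.balances.get(user, 0) - amount
        self.total -= amount


def vacuous_test():
    """Runs every line of Vault (full line coverage) but asserts nothing."""
    v = Vault()
    v.deposit("alice", 10)
    v.withdraw("alice", 25)
    return True


def invariant_holds(v):
    """Invariant: no negative balances, and balances sum to the tracked total."""
    no_negatives = all(b >= 0 for b in v.balances.values())
    return no_negatives and sum(v.balances.values()) == v.total


def invariant_test():
    """Same calls as vacuous_test, but the result is checked against the invariant."""
    v = Vault()
    v.deposit("alice", 10)
    v.withdraw("alice", 25)
    return invariant_holds(v)


if __name__ == "__main__":
    print("vacuous test passes:", vacuous_test())
    print("invariant test passes:", invariant_test())
```

Both tests exercise the same lines, so a coverage report cannot tell them apart. Only the invariant check reveals that a user can withdraw more than they deposited.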

AI can audit code, but its capabilities are limited.

Although single-shot prompting of ChatGPT and other LLMs can detect vulnerabilities, the typical outcomes are:

~40% precision → 6 out of 10 findings are false positives.

~40% recall → misses 6 out of 10 real vulnerabilities.

More sophisticated setups, such as multi-agent or ML-based pipelines, can reach >90% precision and recall.
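As a worked example of what ~40% precision and ~40% recall mean in practice, the sketch below computes both metrics with the standard definitions. The counts are hypothetical and chosen only to match the figures above: a tool reports 10 findings, 4 of them real, on a codebase that contains 10 real vulnerabilities.

```python
def precision(true_positives, false_positives):
    """Share of reported findings that are real vulnerabilities."""
    return true_positives / (true_positives + false_positives)


def recall(true_positives, false_negatives):
    """Share of real vulnerabilities that were reported."""
    return true_positives / (true_positives + false_negatives)


# Hypothetical counts, for illustration only.
reported_findings = 10
real_vulnerabilities = 10
true_positives = 4

false_positives = reported_findings - true_positives      # 6 false alarms
false_negatives = real_vulnerabilities - true_positives   # 6 missed bugs

p = precision(true_positives, false_positives)
r = recall(true_positives, false_negatives)

if __name__ == "__main__":
    print(f"precision = {p:.0%}, recall = {r:.0%}")
```

With these numbers, an auditor has to discard six false alarms while six real bugs remain unflagged, which is why raw single-shot output cannot stand in for a review.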

AI is excellent at identifying known patterns but poor at new or complex logic.

Example: a model flagged a reentrancy bug but missed a cross-chain MEV exploit nearby.

AI only detects what’s in its training data; novel exploits need human insight. Today’s AI handles routine, recognisable vulnerabilities well, but not complex, protocol-level logic that requires economics, game theory, or multi-protocol reasoning.

How we use AI at Oak Security

AI is already a core part of our workflow:

Detecting common bugs

Summarising large codebases

Automating fuzzing setups

Generating proofs of concept

Speeding up documentation and reporting

Eliminating repetitive tasks

AI handles the noise, while humans focus on novel and critical vulnerabilities. It doesn’t replace auditors; it supercharges them.

How to use AI safely in smart contract audits

1. Use AI for high-coverage, low-risk tasks.

Pattern detection

Code summarisation

Auto-documentation

Test generation

Fuzzing scaffolds

2. Keep humans in the loop for anything requiring reasoning.

Protocol logic review

Economic/game-theoretic analysis

Cross-chain interactions

Attack-surface mapping

Novel exploit discovery

3. Never trust a single AI output.

Run multi-agent or ensemble models where possible

Validate everything manually

Treat AI findings as suggestions, not truths
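One way to act on step 3 is to run several independent models or prompts and rank their findings by agreement before manual review. The following is a minimal sketch under stated assumptions: the `rank_findings` helper, the `(function, issue)` finding format, and the sample runs are all hypothetical and do not describe any particular tool.

```python
from collections import Counter


def rank_findings(runs):
    """Rank findings by how many independent runs reported them.

    `runs` is a list of collections of (function_name, issue) tuples,
    one collection per model run. Returns (finding, votes) pairs,
    most-agreed-upon first, ties broken alphabetically.
    """
    votes = Counter()
    for findings in runs:
        votes.update(set(findings))  # each run votes at most once per finding
    return sorted(votes.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical output from three separate model runs.
runs = [
    [("withdraw", "reentrancy"), ("mint", "missing-access-control")],
    [("withdraw", "reentrancy")],
    [("withdraw", "reentrancy"), ("swap", "integer-overflow")],
]

review_queue = rank_findings(runs)

if __name__ == "__main__":
    for finding, count in review_queue:
        print(f"{count}/{len(runs)} runs: {finding}")
```

The ranking only orders the review queue. Findings reported by a single run stay in the list, and every entry remains a suggestion until a human auditor has validated it.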

4. Protect your private code.

Use local open-source models

Avoid sending sensitive code to cloud LLMs

Keep logs, prompts, and outputs internal

5. Combine the three pillars of security.

AI for speed and coverage

Human auditors for complexity, creativity, and novel exploits

A zero-trust security mindset across the entire team

Equip auditors; don’t replace them. That’s how we build safer protocols and a safer ecosystem.

The future: humans × AI.

AI is best at patterns, boilerplate, and speed. Humans excel at economic reasoning, game theory, complex logic, and novel exploits.

Together, they’re stronger than either alone.

Want to level up your team’s OpSec?

We run a free Web3 Security Awareness Training for technical and non-technical teams. Register.

It covers:

Team behaviour

Key management

Phishing and device compromise

Practical ways to avoid exploits

Get a quote for your project, schedule a call with our team, follow us on X, and sign up for our newsletter for simplified and curated Web3 security insights.